Optimizing Images for AI Search Results

Showing up in answer engines is all about having the right content to answer the user's question, and that content includes images.

Answer engines generate direct answers and use images to support those answers, not just decorate them. Visuals turn an answer into a multimodal experience that feels clearer, more credible, and more helpful. Understanding how answer engines discover, evaluate, and reuse images is now essential for brands that want visibility in AI-generated results.

Below is a practical, plain‑language breakdown of how answer engines choose images, and what you can do to make your visuals more likely to appear inside AI answers.

SEO vs. answer engines: what’s changed?

For years, image optimization was about helping search engines find and rank your visuals. Now, answer engines don’t just index images; they actively surface them inside generated responses. That shift fundamentally changes what it means to optimize your images.

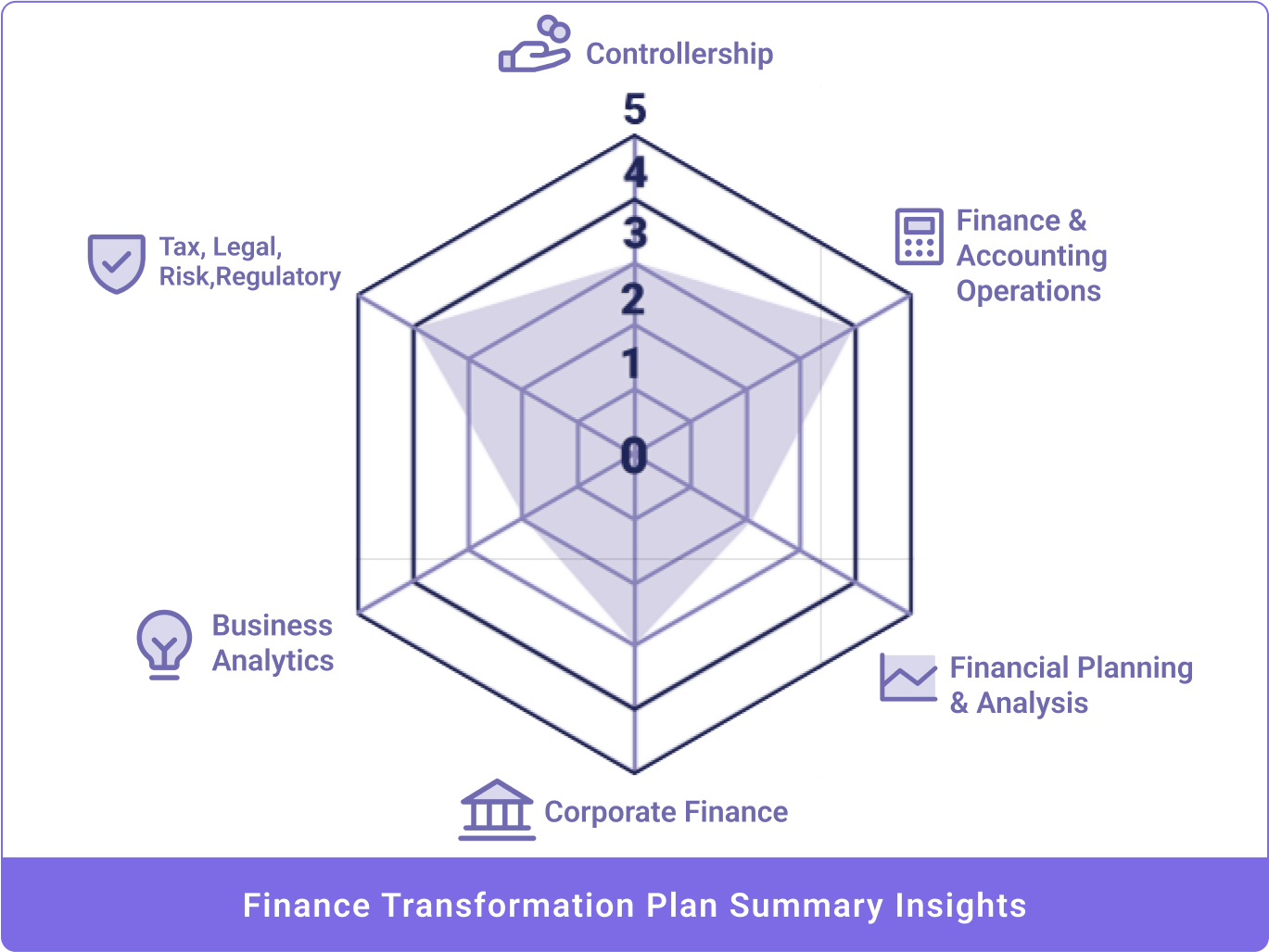

Traditional SEO focuses on ranking assets. Answer engines focus on selecting assets that are safe, accurate, and useful enough to embed directly into an answer. As a result, image signals are weighted very differently. Here's a breakdown of how each signal matters for SEO and for answer engine optimization (AEO).

| Signal | Importance for SEO | Importance for AI (AEO) | Strategic Note |
|---|---|---|---|
| Alt Text | High: Primary way search engines "see" the image. | Critical: Essential for LLM grounding and accessibility. | For AI, be more descriptive; include context, not just keywords. |
| File Names | Moderate: Helps with image search ranking. | Moderate: Provides a secondary semantic signal. | Use blue-origami-crane.jpg instead of IMG_123.jpg. |
| Captions & Context | Moderate: Helps with page relevance. | High: Provides the "semantic anchor" the AI uses to verify the image. | AI uses surrounding text to determine if the image is a "proof" of the claim. |
| Structured Data | Optional: Helps with "Rich Results" (e.g., product badges). | High: Explicitly defines the image's relationship to the content. | Use Schema.org ImageObject to define author and license. |
| Original Imagery | Low: Stock photos rank fine. | High: Major trust and "uniqueness" signal for LLMs. | AI models are increasingly trained to prefer "evidence" over generic stock. |
| Visual Clarity | Moderate: Better for UX/Conversion. | High: Necessary for computer vision & reuse. | High contrast and clear subjects help AI "parse" the image components. |

Once you understand the shift from ranking images to surfacing them, it becomes easier to see how answer engines determine if and when an image belongs in a response at all.

When do answer engines decide to show an image?

The first step is simple: the engine decides whether a visual will improve understanding.

Answer engines provide multimodal responses that assist the user in getting a thorough answer to their question, which often means surfacing an image to help.

If a user asks a question like “How do I fix a leaky faucet?”, the system recognizes that diagrams or step‑by‑step visuals could add clarity and will look for those types of images to include. For abstract or purely factual questions, an image may not be used at all.

Maybe you’re thinking, “We only use stock images” or “We don’t sell online, so images don’t matter.” But consider this: charts, graphs, tables, and maps are all images, which widens the range of images that may get surfaced in answer engines. Most businesses, if not all, can improve their chances of showing up in answer engine results by including and optimizing images.

For example, businesses that operate within a specific geographical area should include a service area map on their website, and unique business offers or methods should be supported by logos and flowcharts.

Think about what visuals would help tell your business's story. A pediatric dental practice might want photos of a warm, welcoming lobby to put nervous kids and parents at ease. A hotel could showcase an accessible entrance so guests know what to expect before they arrive. If you run an HVAC or home services company, images of your uniformed, professional staff or branded fleet of vehicles can reassure customers that the right people are showing up at their door.

A picture really is worth a thousand words — so consider what images would best represent your business when it surfaces in generative search results.

How answer engines choose images

When an answer engine includes an image, it’s making a deliberate decision. That image isn’t there to fill space or add visual interest. It’s there to help explain, confirm, or reinforce the answer being generated. Because that image becomes part of the response itself, the selection process is far more intentional than traditional image search.

Answer engines don’t just “grab” an image. They follow a multi-step process designed to surface visuals that are accurate, trustworthy, and tightly aligned with user intent. Each step reduces risk, improves clarity, and ensures the image supports the answer rather than distracts from it.

Image discovery

The engine first looks for images from high-quality pages that already support the text answer. These pages tend to be authoritative, well-structured, and clearly written.

Images are represented as vectors, or mathematical summaries of what the image contains. This allows the system to find visuals that are semantically related to the question—even when the wording doesn’t match exactly.

Multimodal ranking

Once an answer engine has gathered a pool of possible images, the next step is deciding which ones actually belong in the response. At this stage, the engine isn’t just asking whether an image is related. It’s evaluating whether the image truly supports the meaning of the answer being generated.

To do this, the engine compares candidate images to the user’s question using models that understand both language and visuals. The goal is to ensure that what the image shows aligns with what the answer says.

At this stage, the engine evaluates:

- Whether the image actually shows what the text describes

- How closely the image’s meaning matches the user’s intent

- Whether the image helps explain or confirm the answer

Only images that pass this relevance test move forward.

Trust and usability checks

After relevance is confirmed, answer engines apply a final set of filters focused on safety, quality, and user experience. These checks help determine whether an image can be confidently surfaced inside an AI-generated response.

Quality signals include:

- Image clarity and resolution

- Aspect ratio and usability across different interfaces

- Source authority and overall site credibility

Images from trusted domains with clear supporting content are far more likely to be reused. At this point, the engine isn’t just selecting the best-looking image. It’s selecting the image it can trust to represent the answer accurately.
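To make the order of these steps concrete, here is a minimal Python sketch of the flow described above: filter by relevance first, then apply quality and trust checks, then pick the strongest survivor. It is an illustration only, not how any particular answer engine works. The `Candidate` fields, the scores, and every threshold are hypothetical values invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    relevance: float     # hypothetical 0-1 score from a multimodal model
    width: int           # image width in pixels
    source_trust: float  # hypothetical 0-1 site credibility score


def select_image(candidates, min_relevance=0.6, min_width=600, min_trust=0.5):
    """Return the best candidate that passes every check, or None."""
    # Step 1: keep only images that clear the relevance bar.
    relevant = [c for c in candidates if c.relevance >= min_relevance]
    # Step 2: apply quality and trust filters to what is left.
    usable = [
        c for c in relevant
        if c.width >= min_width and c.source_trust >= min_trust
    ]
    if not usable:
        return None  # no image is shown rather than a risky one
    # Step 3: prefer the most relevant image, breaking ties on trust.
    return max(usable, key=lambda c: (c.relevance, c.source_trust))


candidates = [
    Candidate("https://example.com/faucet-diagram.png", 0.91, 1200, 0.8),
    Candidate("https://example.com/stock-kitchen.jpg", 0.42, 2000, 0.9),
    Candidate("https://example.com/faucet-thumb.jpg", 0.88, 200, 0.8),
]
best = select_image(candidates)
print(best.url if best else "no image shown")
```

In this toy run the stock photo is dropped for low relevance and the thumbnail is dropped for low resolution, which leaves the labeled diagram.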

Matching the image to the answer

To select the right image, answer engines need more than keywords or filenames. They must determine whether an image can help answer the question. This is where large language models move beyond traditional search and begin interpreting visuals and text as part of the same system.

Answer engines translate both text and images into a shared mathematical space, often called a multimodal embedding.

In simple terms:

- The question is converted into a representation of its meaning

- Images across the web are scanned for similar meaning

- The engine compares them to find the closest match

This allows answer engines to select images that fit user intent even when the exact wording differs. Trust signals such as site authority and structured data help determine which images are appropriate to surface inside AI-generated responses.
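The comparison step can be sketched with cosine similarity, a common way to measure how close two embeddings are. The three-number vectors below are made up for illustration; real multimodal embeddings come from a trained model and have hundreds or thousands of dimensions, and the file names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: closer to 1.0 means closer in meaning."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy embedding for the question "How do I fix a leaky faucet?"
question = [0.9, 0.1, 0.2]

# Toy embeddings for three candidate images (invented values).
images = {
    "faucet-repair-diagram.png": [0.8, 0.2, 0.1],
    "kitchen-stock-photo.jpg": [0.3, 0.9, 0.1],
    "company-logo.svg": [0.1, 0.1, 0.9],
}

# Rank the images from closest to farthest in meaning.
ranked = sorted(
    images,
    key=lambda name: cosine_similarity(question, images[name]),
    reverse=True,
)
print(ranked[0])
```

The repair diagram ranks first because its vector points in nearly the same direction as the question's, even though no keywords were compared.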

Interpreting context and intent

Finding a relevant image is only part of the process. While machines can identify objects and patterns, they can’t always determine why an image exists or how it should be used. That meaning comes from context.

Answer engines evaluate the text and structure surrounding an image to understand its purpose and intent within the page. This context helps the system confirm that the image truly supports the generated answer, rather than simply being loosely related.

Key signals include:

- Alt text that clearly describes what the image shows

- Captions and headings that connect the image to nearby content

- Structured data (schema markup) that defines the image’s subject, creator, and usage

- Proximity, or the content that appears immediately before and after the image

When these signals are clear and consistent, they reduce ambiguity and increase the engine’s confidence that an image is accurate, relevant, and safe to reuse inside an AI-generated response.
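If you want to check your own pages for two of these signals, a short script can help. The sketch below uses Python's built-in HTML parser to flag images with no alt text or no caption. It assumes captions are written as a `<figcaption>` inside a `<figure>`, which is only one way to mark them up, and the sample page is invented.

```python
from html.parser import HTMLParser


class ImageAudit(HTMLParser):
    """Record each <img>, its alt text, and whether its <figure> has a <figcaption>."""

    def __init__(self):
        super().__init__()
        self.images = []
        self._figure_start = None   # index of the first image in the open <figure>
        self._figure_caption = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "figure":
            self._figure_start = len(self.images)
            self._figure_caption = False
        elif tag == "figcaption":
            self._figure_caption = True
        elif tag == "img":
            self.images.append({
                "src": attrs.get("src") or "",
                "alt": (attrs.get("alt") or "").strip(),
                "has_caption": False,
            })

    def handle_endtag(self, tag):
        if tag == "figure" and self._figure_start is not None:
            # Mark every image inside this figure once we know about its caption.
            for image in self.images[self._figure_start:]:
                image["has_caption"] = self._figure_caption
            self._figure_start = None


def audit(html):
    """Return (src, problem) pairs for images missing alt text or a caption."""
    parser = ImageAudit()
    parser.feed(html)
    parser.close()
    problems = []
    for image in parser.images:
        if not image["alt"]:
            problems.append((image["src"], "missing alt text"))
        if not image["has_caption"]:
            problems.append((image["src"], "no caption"))
    return problems


page = """
<h2>Our service area</h2>
<figure>
  <img src="service-area-map.png" alt="Map of the metro counties served by our HVAC technicians">
  <figcaption>We serve seven counties in the metro area.</figcaption>
</figure>
<img src="IMG_123.jpg">
"""
for src, problem in audit(page):
    print(src, "->", problem)
```

Treat the output as a to-do list rather than a verdict. Purely decorative images can legitimately have empty alt text, and not every image needs a caption.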

Image freshness: how old is too old?

Outdated visuals get deprioritized because they no longer match how answer engines understand the current world. When an image looks inaccurate or out of date, it introduces risk, and models avoid using it. LLMs hate it when they’re not right.

Recency matters most when:

- Your industry changes quickly

- Accuracy and trust are critical

- The product or service evolves over time

- The brand’s visual identity has changed

Image freshness plays the biggest role in industries such as commerce, home services, healthcare, banking, finance, and bars/restaurants. In these categories, current photos show that a business is active, open, and reliable, and that its website is updated regularly.

How to make your images answer‑engine ready

Now that you know how LLMs select images for their answers, how can you make sure your images are fully optimized to show up in the right results?

Here’s how to adapt from traditional image optimization to optimizing for answer engines.

1. Go beyond basic alt text

Alt text is no longer just an accessibility requirement. It’s a confirmation signal for AI.

Strong alt text is:

- Literal: describes exactly what’s visible

- Specific: materials, type, purpose

- Unambiguous: clear subject, no fluff

- Entity‑rich: brands, products, or tools when relevant

- Contextual: industry or use case when helpful

Example

- Weak alt text: alt="blue sneakers"

- AEO‑ready alt text: alt="Blue lightweight marathon running sneakers with carbon‑fiber plates"

Use descriptive file names as well: marathon-racing-sneakers-blue.jpg is far more useful than IMG_902.jpg.
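If you rename images in bulk, a small helper can turn a plain description into a hyphenated file name. This is a rough sketch: the function name, the six-word cap, and the default extension are arbitrary choices for this example, not a standard.

```python
import re
import unicodedata


def descriptive_file_name(description, extension="jpg", max_words=6):
    """Turn a short description into a lowercase, hyphen-separated file name."""
    # Strip accents so "Café" becomes "Cafe" instead of being dropped.
    ascii_text = (
        unicodedata.normalize("NFKD", description)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Keep only runs of letters and digits; punctuation and spaces act as separators.
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    return "-".join(words[:max_words]) + "." + extension


print(descriptive_file_name("Blue marathon racing sneakers"))
```

The helper only formats text. Choosing words that match what the image actually shows is still up to you.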

2. Use structured data to reduce guesswork

Structured data is one of the strongest technical signals you can provide.

Schema markup, such as ImageObject or Product, tells answer engines:

- What the image represents

- Who created it

- How it should be interpreted

This reduces uncertainty and increases the likelihood that an image can be safely used in an AI response.
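As an illustration, here is one way to generate ImageObject markup as JSON-LD with Python. The properties used (`contentUrl`, `name`, `description`, `creator`, `license`) are part of the Schema.org vocabulary, but the helper function, the URLs, and the brand name are placeholders invented for this sketch. Swap in your own values and validate the result before publishing.

```python
import json


def image_object_jsonld(content_url, name, description, creator_name, license_url):
    """Build a JSON-LD <script> tag describing one image as a Schema.org ImageObject."""
    data = {
        "@context": "https://schema.org",
        "@type": "ImageObject",
        "contentUrl": content_url,
        "name": name,
        "description": description,
        "creator": {"@type": "Organization", "name": creator_name},
        "license": license_url,
    }
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# All values below are placeholders.
snippet = image_object_jsonld(
    content_url="https://example.com/images/marathon-racing-sneakers-blue.jpg",
    name="Blue marathon racing sneakers",
    description="Blue lightweight marathon running sneakers with carbon-fiber plates",
    creator_name="Example Shoe Co.",
    license_url="https://example.com/image-license",
)
print(snippet)
```

The printed tag can be pasted into the page's HTML alongside the image it describes.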

3. Anchor images to strong text

Answer engines don’t pull random visuals. They look for images that are tightly connected to high‑quality nearby content.

Best practices include:

- Placing key images near the top of the page, directly after a clear answer

- Using captions that reinforce the main point

- Ensuring the surrounding text uses the same language you want associated with the image

In most cases, engines analyze roughly 50–100 words around an image to determine relevance, so place images wisely.
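To see what that nearby text looks like on your own page, you can pull the words on either side of each image. The sketch below uses Python's built-in HTML parser and a configurable window. It is a simplification: it counts every piece of text in the markup, including any inside script or style tags, and the sample HTML is invented.

```python
from html.parser import HTMLParser


class ContextCollector(HTMLParser):
    """Collect the page's words in order and note where each <img> falls among them."""

    def __init__(self):
        super().__init__()
        self.words = []
        self.image_positions = []  # (src, index into self.words)

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            src = dict(attrs).get("src") or ""
            self.image_positions.append((src, len(self.words)))

    def handle_data(self, data):
        self.words.extend(data.split())


def context_around_images(html, window=50):
    """Map each image src to the words within `window` words before and after it."""
    collector = ContextCollector()
    collector.feed(html)
    collector.close()
    result = {}
    for src, position in collector.image_positions:
        start = max(0, position - window)
        result[src] = " ".join(collector.words[start:position + window])
    return result


sample = (
    "<p>Turn off the water supply first.</p>"
    '<img src="faucet-shutoff-valve.png" alt="Shutoff valve under a sink">'
    "<p>Then remove the handle.</p>"
)
print(context_around_images(sample, window=3))
```

If the words that come back do not mention what the image shows, consider moving the image or rewriting the text around it.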

4. Avoid stock photos when possible

Answer engines prefer images that explain something, not just illustrate a mood.

Higher‑performing visuals include:

- Diagrams

- Charts

- Labeled photos

- Step‑by‑step instructional images

Custom, information‑dense visuals are far more likely to be cited than generic stock photography. Clarity matters! High contrast, simple backgrounds, and clear focal points make images easier for AI systems to interpret.

Optimizing your site for AI engines with Perrill

Images are no longer supporting assets. They are evidence.

If your product, service, environment, or brand has changed, your images must change too. Updated visuals produce more accurate embeddings, stronger trust signals, and a higher likelihood of being surfaced within AI‑generated answers. For AI visibility, visual freshness isn’t a nice‑to‑have; it’s a requirement.

As answer engines grow in popularity, making sure your brand is well-represented in AI results is crucial to capturing leads, sales, and revenue. If you're ready to start preparing your business to show up in generative search engines, our GEO team will work with your team to ensure you're showing up wherever your audience is looking for you. Start optimizing your business for AI visibility—reach out to get started.

Jen Jones

Author
